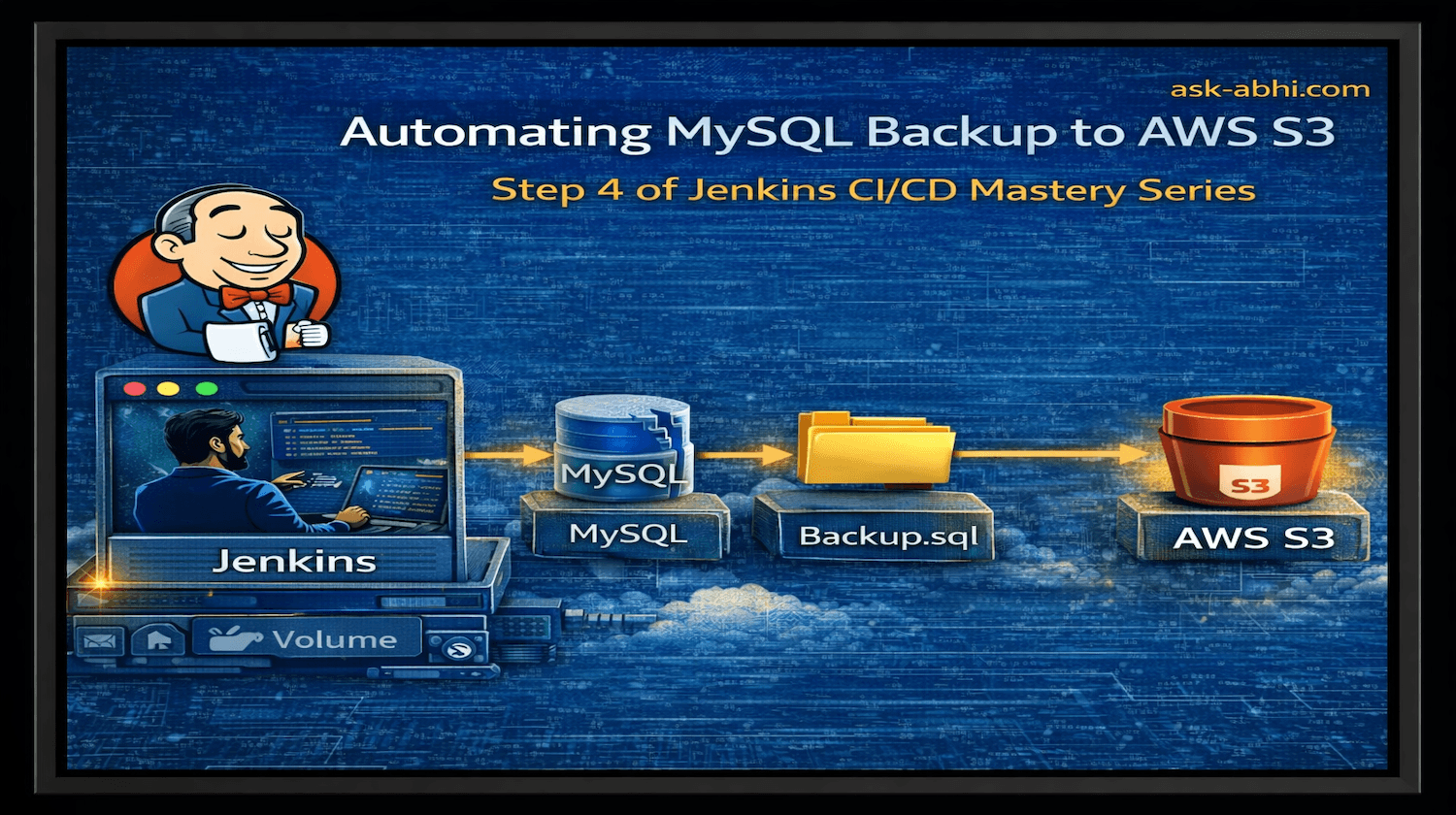

Part 4: Automating MySQL Backup to AWS S3

Real world use case

Jenkins CI/CD Series

Goal

The goal of this project is to build a real-world DevOps automation workflow where Jenkins automatically creates a MySQL database backup and uploads it to Amazon S3.

In this exercise, Jenkins will connect to the remote server created in Part 2, execute a script that performs a mysqldump, and securely upload the backup file to an S3 bucket using AWS CLI.

By the end of this project, you will have a fully automated database backup pipeline controlled by Jenkins.

We will use this remote server to take the MySQL database backup and upload it to a remote location (S3).

Purpose

The purpose of this exercise is to demonstrate how Jenkins can be used to automate infrastructure maintenance tasks, such as database backups and secure cloud storage operations.

In production environments, database backups are critical for:

Disaster recovery

Data protection

Compliance requirements

Operational resilience

Instead of manually running backup scripts, DevOps teams typically automate this process using CI/CD tools like Jenkins.

Through this project, readers will learn how to:

Use Jenkins to trigger automation scripts

Execute scripts on remote infrastructure

Securely manage credentials

Integrate Jenkins with AWS services

This project simulates a real-world backup automation pipeline commonly used in enterprise infrastructure.

Prerequisites

Before starting this project, ensure the following components are already set up.

Ready to use

Host and the directory structure to run the Dockerfile and docker-compose.yml (refer to Part 1)

An AWS account

Step-by-step implementation

Before we create the "db" container, we need to install some useful tools on the remote-host container, mainly the MySQL client and the AWS CLI.

Pro-Tip: Read Dockerfile carefully

- Go to /home/jenkins/jenkins_home/centos7 and modify the Dockerfile

FROM rockylinux:9

# Install required packages
RUN dnf install -y \
    openssh-server \
    passwd \
    sudo \
    mysql \
    unzip \
    less \
    groff && \
    dnf clean all

# Install AWS CLI v2
RUN curl "https://awscli.amazonaws.com/awscli-exe-linux-x86_64.zip" -o "awscliv2.zip" && \
    unzip awscliv2.zip && \
    ./aws/install && \
    ./aws/install --update && \
    rm -rf aws awscliv2.zip

# Create user
RUN useradd -m remote_user && \
    echo "remote_user:1234" | chpasswd

# Configure SSH directory
RUN mkdir -p /home/remote_user/.ssh

# Copy public SSH key
COPY remote-key.pub /home/remote_user/.ssh/authorized_keys

# Fix SSH permissions
RUN chown -R remote_user:remote_user /home/remote_user/.ssh && \
    chmod 700 /home/remote_user/.ssh && \
    chmod 600 /home/remote_user/.ssh/authorized_keys

# Enable password authentication for SSH
RUN sed -i 's/#PasswordAuthentication yes/PasswordAuthentication yes/' /etc/ssh/sshd_config

# Generate SSH host keys
RUN ssh-keygen -A

# Expose SSH port
EXPOSE 22

# Start SSH daemon
CMD ["/usr/sbin/sshd","-D"]

- Update docker-compose.yml to create a db container (we keep it under jenkins_home)

This creates a container named "db" from the mysql:8.0 image, sets its root password to "1234", stores its data volume at the given path, and attaches it to the existing network so it can communicate with the other containers.

services:
  jenkins:
    container_name: jenkins
    image: jenkins/jenkins:lts
    ports:
      - "8080:8080"
    volumes:
      - $PWD/jenkins_home:/var/jenkins_home
    networks:
      - net
  remote_host:
    container_name: remote-host
    image: remote-host
    build:
      context: centos7
    networks:
      - net
  db_host:
    container_name: db
    image: mysql:8.0
    environment:
      MYSQL_ROOT_PASSWORD: 1234
    volumes:
      - $PWD/db_data:/var/lib/mysql
    networks:
      - net
networks:
  net:

- Build a new image for the container remote-host (SSH server)

docker compose build

- Create db container

docker compose up -d

- Check the logs to verify that MySQL is ready

docker logs -f db

- Look at the message shown below

docker ps
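
Instead of watching the logs by hand, the readiness check can be scripted. The following is a minimal sketch, not part of the original setup: `wait_for_mysql` and its two arguments are hypothetical, and it assumes the container name `db` and the root password `1234` from docker-compose.yml. Note that `mysqladmin ping` reports success as soon as a server process answers, so the logs remain the authoritative check during first-time initialization.

```shell
#!/bin/bash
# wait_for_mysql is a hypothetical helper: it polls the "db" container
# until MySQL answers a ping, or gives up after a number of attempts.
# Assumed from docker-compose.yml: container name "db", root password "1234".
wait_for_mysql() {
  local retries="${1:-30}"   # maximum number of attempts
  local delay="${2:-2}"      # seconds to sleep between attempts
  local attempt=1
  while [ "$attempt" -le "$retries" ]; do
    # mysqladmin ping exits 0 when a MySQL server is running and reachable
    if docker exec db mysqladmin ping -uroot -p1234 --silent >/dev/null 2>&1; then
      echo "MySQL is ready (attempt $attempt)"
      return 0
    fi
    sleep "$delay"
    attempt=$((attempt + 1))
  done
  echo "MySQL did not become ready after $retries attempts" >&2
  return 1
}

# Usage on the Docker host:
#   wait_for_mysql 30 2
```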

- Log in to the db container and verify

# login to container

docker exec -it db bash

# login to mysql

mysql -u root -p

# password is given in docker compose

1234

# check databases

show databases;

- These results should look like this:

- Log in to the remote-host and check if the basic MySQL and AWS CLI tools are working

Note - Errors are expected here; they mean the tools are installed.
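
One way to make this check explicit is to test whether each command is on the PATH rather than reading error messages. This is only a sketch: `check_tools` is a hypothetical helper name, and the tool list in the usage line is an assumption based on the packages installed by the Dockerfile.

```shell
#!/bin/bash
# check_tools is a hypothetical helper: it reports whether each named
# command is on the PATH and returns non-zero if any of them is missing.
check_tools() {
  local missing=0
  local tool
  for tool in "$@"; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "found: $tool"
    else
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage inside the remote-host container:
#   check_tools mysql mysqldump aws
```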

Go back to the db container and log in

docker exec -ti db bash

Create a database by using the SQL queries below

# Create DB

mysql> create database testdb;

# Change db

mysql> use testdb;

# Create DB table

mysql> create table info (name varchar(20), lastname varchar(20), age int(2));

# Add some fake info

mysql> insert into info values ('abhi', 'chougule', 30);

# Verify

mysql> select * from info;

- The result should look like the following

- Insert some details
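
The same test data can also be loaded without an interactive session. The sketch below is an illustration under assumptions: `seed_sql` is a hypothetical helper, and the `IF NOT EXISTS` clauses are an addition so that the create statements can be re-run safely (the insert still adds a new row on every run).

```shell
#!/bin/bash
# seed_sql is a hypothetical helper that prints the statements used to
# create the test database. IF NOT EXISTS is an addition for re-runs.
seed_sql() {
  cat <<'SQL'
CREATE DATABASE IF NOT EXISTS testdb;
USE testdb;
CREATE TABLE IF NOT EXISTS info (name VARCHAR(20), lastname VARCHAR(20), age INT);
INSERT INTO info VALUES ('abhi', 'chougule', 30);
SELECT * FROM info;
SQL
}

# Usage on the Docker host (assumes container "db" and root password "1234"
# from docker-compose.yml):
#   seed_sql | docker exec -i db mysql -uroot -p1234
```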

Next AWS S3

Create an S3 bucket by logging into AWS

- Create an IAM user with S3 access

- Create a secret access key with the Command Line Interface (CLI) use case

- Manual upload: Go back to the remote-host container and take a backup of MySQL

[IMP] Note - We are taking the backup from the remote-host bash terminal, so the -h option must point to the database service (db_host), not to remote-host itself

mysqldump -u root -h db_host -p testdb > /tmp/db.sql

- Verify backup
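
A quick sanity check on the dump can be scripted. This is a sketch rather than a full integrity test: `verify_dump` is a hypothetical helper, and it relies on the fact that mysqldump, with its default comment settings, ends a successful dump with a `-- Dump completed` line.

```shell
#!/bin/bash
# verify_dump is a hypothetical helper: it checks that a dump file exists,
# is not empty, and ends with mysqldump's completion comment.
verify_dump() {
  local file="$1"
  # -s is true only when the file exists and has a size greater than zero
  if [ ! -s "$file" ]; then
    echo "backup missing or empty: $file" >&2
    return 1
  fi
  # With default settings, mysqldump writes "-- Dump completed ..." last
  if ! tail -n 5 "$file" | grep -q -e '-- Dump completed'; then
    echo "backup looks truncated: $file" >&2
    return 1
  fi
  echo "backup looks complete: $file"
}

# Usage on remote-host:
#   verify_dump /tmp/db.sql
```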

- Configure the AWS CLI on the remote-host container and upload the backup to S3

- Verify on S3
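
The manual upload and the verification can be combined into one helper. The sketch below assumes the AWS CLI already has credentials (for example from `aws configure` or from environment variables); `upload_backup` is a hypothetical name, and the bucket in the usage line is the one used as a default later in this article.

```shell
#!/bin/bash
# upload_backup is a hypothetical helper. It assumes the AWS CLI is already
# configured with credentials that can write to the bucket.
upload_backup() {
  local file="$1"     # local dump file, e.g. /tmp/db.sql
  local bucket="$2"   # bucket name without the s3:// prefix
  local key
  key=$(basename "$file")
  # Copy the dump to the bucket, then list the object to confirm it arrived
  aws s3 cp "$file" "s3://$bucket/$key" && aws s3 ls "s3://$bucket/$key"
}

# Usage on remote-host:
#   upload_backup /tmp/db.sql jenkins-mysql-backup-mylab
```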

- Let's automate this process of creating a dump and uploading it using Jenkins

Create a script.sh on remote-host under /tmp/script.sh

#!/bin/bash

DATE=$(date +%H-%M-%S)
BACKUP=db-$DATE.sql

DB_HOST=$1
DB_PASSWORD=$2
DB_NAME=$3
AWS_SECRET=$4
BUCKET_NAME=$5

mysqldump -u root -h "$DB_HOST" -p"$DB_PASSWORD" "$DB_NAME" > /tmp/$BACKUP && \
export AWS_ACCESS_KEY_ID=AKIAQE537I5GMUA722C6 && \
export AWS_SECRET_ACCESS_KEY=$AWS_SECRET && \
echo "uploading your $BACKUP backup" && \
aws s3 cp /tmp/db-$DATE.sql s3://$BUCKET_NAME/$BACKUP

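Description

DATE: Stores the current time (hours-minutes-seconds), which keeps each backup file name distinct

BACKUP: The name of the SQL dump file, derived from DATE

Numbering in front of variables ($1 to $5): Decides the order in which the arguments must be passed to the script

DB_HOST, DB_NAME and BUCKET_NAME: Where the actions will be performed

AWS_ACCESS_KEY_ID is exported in the script, but the secret access key is retrieved from Jenkins credentials

aws s3 cp: The copy command that uploads the dump to the bucket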
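
Because script.sh depends entirely on the order of its five arguments, a small guard can catch a wrong call early. The following sketch is an optional variation, not the script used in this article: `require_args` and `backup_name` are hypothetical helpers, and the full date in the file name is an assumption added because a time-only name (`%H-%M-%S`) can repeat on another day.

```shell
#!/bin/bash
# require_args and backup_name are hypothetical helpers for script.sh.

# Fail early unless exactly five positional arguments were passed.
require_args() {
  if [ "$#" -ne 5 ]; then
    echo "usage: script.sh DB_HOST DB_PASSWORD DB_NAME AWS_SECRET BUCKET_NAME" >&2
    return 1
  fi
}

# Build the dump file name. An optional first argument overrides the
# timestamp; otherwise the current date and time are used.
backup_name() {
  local stamp="${1:-$(date +%Y-%m-%d_%H-%M-%S)}"
  echo "db-$stamp.sql"
}

# Usage near the top of script.sh:
#   require_args "$@" || exit 1
#   BACKUP=$(backup_name)
```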

Go to the Jenkins console and create a job to trigger this process

Create secret credentials

Go to Manage Jenkins --> Credentials --> Add credentials --> Secret text and create two entries: AWS_SECRET_ACCESS_KEY and MYSQL_PASSWORD

Create a backup-mysql freestyle job and add these details

Select: This Project is parameterized

String Parameter: MYSQL_HOST

Default Value: db_host

String Parameter: DATABASE_NAME

Default Value: testdb

String Parameter: AWS_BUCKET_NAME

Default Value: jenkins-mysql-backup-mylab

Environments:

Secret text: MYSQL_PASSWORD

Credentials: <MYSQL_PASSWORD>

Secret text: AWS_SECRET

Credentials: <AWS_SECRET_ACCESS_KEY>

Build Steps:

Select: Execute a shell script on a remote host using SSH

SSH site: remote_user@remote_host:22

Command: /tmp/script.sh $MYSQL_HOST $MYSQL_PASSWORD $DATABASE_NAME $AWS_SECRET $AWS_BUCKET_NAME

Pro-Tip: The order of the arguments in the command must match the numbering ($1 to $5) in script.sh, because each position fills one variable in the script

Note 1 - The SSH site for this step was created earlier. To add one, go to Manage Jenkins --> SSH Server and add the details of our SSH server

Note 2 - Form the command based on the sequence in script.sh for all the parameters created above, and use the "$" prefix for each one

Here is what I set up

- Run the

Build with Parameters

- Logs

- Verify upload

Done!!!

Conclusion:

In this project, we built a fully automated database backup pipeline using Jenkins.

The workflow included:

Jenkins triggering automation tasks

Remote server executing the backup script

MySQL database dump creation

Secure upload to AWS S3

This setup demonstrates a practical DevOps automation pattern used in production environments, where CI/CD tools manage infrastructure operations such as backups, deployments, and system maintenance.

By automating database backups through Jenkins, organizations can ensure reliable, repeatable, and secure data protection workflows.

🔗 Continue the Series

⬅️ Previous Article: Part 3 Jenkins + SSH Remote Execution

➡️ Next Article: Part 5 Making Jenkins Automation Scalable

⭐ If you found this article useful, follow https://ask-abhi.com for more DevOps tutorials.